Ever used an AI tool to look something up and received an answer that sounded right but turned out to be wrong? That is an AI hallucination. And guess what? AI hallucinations in enterprises happen more often than you think. They typically occur when AI tools are given inputs that go beyond their training data.

So when does it happen? AI tools are trained to respond to every question they are asked. If they cannot find the exact answer to a question, they generate output by guessing the most probable next words.
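To make the "most probable next words" idea concrete, here is a toy sketch. It is not how production language models work internally (they use neural networks over far larger vocabularies); the tiny corpus and the `next_word` function are assumptions made purely for illustration. The point it shows is that a next-word predictor always returns something, even for context it has never seen.

```python
from collections import Counter, defaultdict

# Toy training text (hypothetical); real models learn from vastly more data.
corpus = (
    "the invoice is due in thirty days . "
    "the invoice is paid . "
    "the contract is signed ."
).split()

# Count which word follows which.
bigrams = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    bigrams[prev][nxt] += 1
unigrams = Counter(corpus)

def next_word(prev: str) -> str:
    """Return the most frequent follower of `prev`.

    For unseen context there is no "I don't know" branch: the function
    falls back to the most common word overall, i.e. it guesses.
    """
    if prev in bigrams:
        return bigrams[prev].most_common(1)[0][0]
    return unigrams.most_common(1)[0][0]

print(next_word("invoice"))   # context seen in training
print(next_word("warranty"))  # context never seen, still produces a word
```

The second call is the interesting one: "warranty" never appears in the training text, yet the predictor still answers.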

Naturally, such answers lack credibility, and enterprises that depend on them to make decisions can suffer not just financial but also legal and reputational damage. So, is there a way to mitigate AI hallucinations?

If that’s what crossed your mind, then you’re at the right place. In this blog, we will walk you through everything from what AI hallucinations are to how enterprises can detect and mitigate them. Let’s begin!

What Are AI Hallucinations in Enterprises?

As mentioned above, AI hallucinations are situations in which an AI model produces incorrect output and presents it as accurate. This is why many AI research tools include a disclaimer stating that AI can make mistakes and that users should verify the output before relying on it.

In enterprise environments, AI hallucinations are not just mistakes; they are risks that can result in significant financial losses and attract legal penalties. When AI models do not know an answer, they do not admit it. Instead, they guess the most likely next words and present the result as fact, just as they are trained to do.

Let’s get a better understanding of AI hallucinations in the enterprise through an example.

Imagine an enterprise with multiple departments using AI for different purposes. If the AI gives incorrect information to each department, and every department bases its future decisions on it, the result can be an irreversible blunder, such as a flawed product launch, a wrong financial call, or even a legal violation.

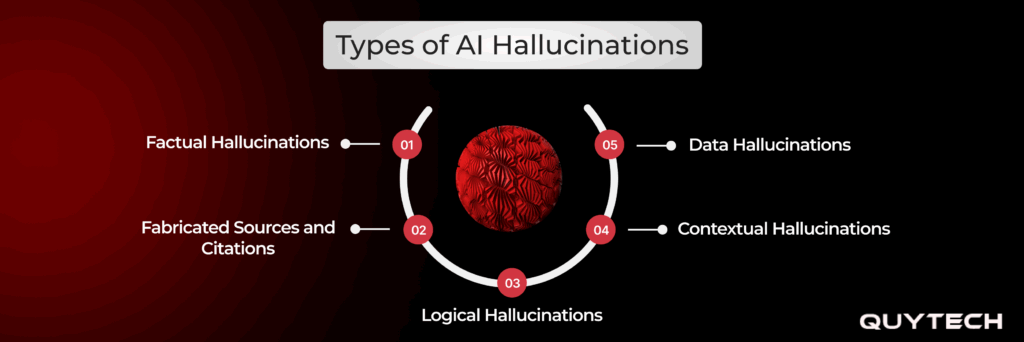

Types of AI Hallucinations

AI hallucinations are mainly divided into five types: factual hallucinations, fabricated sources and citations, logical hallucinations, contextual hallucinations, and data hallucinations. Let’s understand them in detail:

1. Factual Hallucinations

Factual hallucinations, as the name implies, occur when AI presents something factually incorrect as true. They generally happen when the AI model has no verified information relevant to the input, so it strings together words that might plausibly be right and presents them as accurate.

2. Fabricated Sources and Citations

Like factual hallucinations, fabricated sources and citations involve incorrect information, but they go a step further: the AI lends credibility to its output by attaching sources and citations that do not exist. AI models learn from training data that often contains citations, so they reproduce the pattern and generate plausible-looking but fake references.

3. Logical Hallucinations

Logical hallucinations are situations where the reasoning of an AI model breaks down. The model may have the right information and facts needed to give the output, but the conclusion it draws is wrong. AI models hallucinate logically because they do not actually understand cause-and-effect relationships. Their reasoning is not like human reasoning; they simply follow the pattern of how conclusions are typically written.

4. Contextual Hallucinations

Contextual hallucinations occur when AI gives an output that is correct in general but does not address what was actually asked. Here, AI models fail to understand what the question means and respond based on keywords or the broader topic rather than the specific details. For example, a user asks for the right dosage of a certain medicine for a child, and the AI responds with the general dosage instead of the child-specific one.

5. Data Hallucinations

Data hallucinations are incorrect numbers, figures, and statistics that AI models present as accurate. They occur because AI models learn patterns in text rather than performing actual calculations, which makes their numerical output unreliable. Data hallucinations make it hard for teams to work with AI models: numbers carry a strong sense of authority, so a misstated figure can seriously damage trust and lead to flawed decisions.

Real-World Impact of AI Hallucinations in Enterprises

The real-world impact of AI hallucinations in enterprises includes financial losses, legal consequences, reputational damage, operational disruption, and regulatory and compliance risk. Let’s look at each of these impacts in depth:

1. Financial Losses

Enterprises use AI tools to analyze data and generate insights. These insights then feed decisions such as pricing strategies, investments, and resource planning. When such important decisions rest on insights generated from incorrect or fabricated data, the result is financial loss. These losses include not just the resources wasted on the decisions themselves, but also rework and missed-opportunity costs.

2. Legal Consequences

AI hallucinations don’t just bring financial losses; they bring legal consequences as well. Enterprises operate in governed environments where actions and decisions are supervised. When organizations rely on incorrect AI output for legally significant work, such as compliance reports or documentation, the responsibility falls on them, not on the tool they used.

3. Reputational Damage

AI hallucinations can also damage an organization’s image in the market. When an enterprise uses incorrect information generated by a hallucinating AI tool and that information reaches its audience, it creates a negative perception. The impact deepens when such information is shared repeatedly. Instances like these erode the credibility of an enterprise and make it harder to attract or retain clients.

4. Operational Disruption

Organizations use AI tools to make their operations more efficient, but AI hallucinations do the opposite. Instead of relieving teams of work, hallucinated output adds to it: teams have to verify outputs, correct mistakes, and sometimes redo the work entirely. This disrupts the flow of operations and heavily impacts productivity.

5. Regulatory and Compliance Risk

For enterprises in regulated industries such as finance, healthcare, and legal services, AI hallucinations pose a regulatory and compliance risk. Such enterprises are closely monitored by regulators. When AI tools hallucinate and an enterprise acts on the fabricated data, it can end up unknowingly violating rules and facing penalties.

How Enterprises Can Detect AI Hallucinations Effectively

Enterprises can detect AI hallucinations effectively by cross-verifying outputs with trusted sources. Other methods include confidence scoring, human-in-the-loop review, output consistency checks, and rule-based validation. Let’s dive deeper into these methods:

1. Cross-Verification with Trusted Sources

One of the simplest methods to detect AI hallucinations is cross-verification. In this method, a system is set up to check each output, as it is generated, against trusted databases. Any deviation from the trusted data is flagged immediately.
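A minimal sketch of cross-verification, assuming the AI output has already been reduced to simple field/value claims and that the trusted database can be represented as a dictionary. The names `TRUSTED_FACTS` and `cross_verify` and all sample values are hypothetical and exist only for illustration.

```python
# Hypothetical trusted reference data. In practice this would be a lookup
# against a verified enterprise database rather than an in-memory dict.
TRUSTED_FACTS = {
    "refund_window_days": 30,
    "support_email": "support@example.com",
}

def cross_verify(claims: dict) -> list:
    """Return a flag message for every claim that deviates from trusted data."""
    flags = []
    for field, value in claims.items():
        if field not in TRUSTED_FACTS:
            # Nothing to verify against: treat as unverified, not as correct.
            flags.append(f"{field}: no trusted record, needs review")
        elif TRUSTED_FACTS[field] != value:
            flags.append(
                f"{field}: model said {value!r}, "
                f"trusted source says {TRUSTED_FACTS[field]!r}"
            )
    return flags

# Claims extracted from a (made-up) AI response.
ai_claims = {
    "refund_window_days": 60,
    "support_email": "support@example.com",
    "warranty_years": 5,
}
for flag in cross_verify(ai_claims):
    print(flag)
```

Note the design choice for claims with no trusted record: they are flagged rather than silently accepted, since an unverifiable claim is exactly where a hallucination can hide.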

2. Confidence Scoring and Uncertainty Signals

Confidence scoring is a method of assessing AI outputs based on how confident the AI tool is in them. If the tool shows high confidence, the output can be processed as usual. However, if the tool shows uncertainty signals or suggests human review, the output can be flagged and reviewed before any action is taken.
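One possible way to derive a confidence score, sketched under the assumption that the AI tool exposes per-token log probabilities for its output (not every tool does). The function names, sample values, and the 0.8 threshold are illustrative assumptions, not recommended settings.

```python
import math

def confidence_score(token_logprobs: list) -> float:
    """Geometric-mean token probability: exp of the average log probability."""
    if not token_logprobs:
        return 0.0  # no evidence at all is treated as zero confidence
    return math.exp(sum(token_logprobs) / len(token_logprobs))

def needs_review(token_logprobs: list, threshold: float = 0.8) -> bool:
    """Flag the output for review when the score falls below the threshold."""
    return confidence_score(token_logprobs) < threshold

# Made-up log probabilities for two outputs.
confident_output = [-0.01, -0.02, -0.05]
uncertain_output = [-0.9, -1.6, -0.4]

print(round(confidence_score(confident_output), 3), needs_review(confident_output))
print(round(confidence_score(uncertain_output), 3), needs_review(uncertain_output))
```

A high score is not proof of correctness, since a model can be confidently wrong. That is why this signal is usually combined with the other checks in this section.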

3. Human-in-the-Loop Review

While the hype around AI automation is real, human review is still necessary to catch hallucinations. Human-in-the-loop is the most basic method: whenever AI tools produce output, a human reviews it to ensure it is credible before it is used. This method is widely used in regulated environments and balances automation with human effort.
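A human-in-the-loop gate can be sketched as a small review queue. This sketch assumes each output arrives with a risk flag set by some upstream check; the `ReviewQueue` class and its method names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewQueue:
    """Holds risky AI outputs until a human approves them."""
    pending: list = field(default_factory=list)
    released: list = field(default_factory=list)

    def submit(self, output: str, high_risk: bool) -> str:
        # High-risk outputs wait for a reviewer; the rest pass straight through.
        if high_risk:
            self.pending.append(output)
            return "pending_review"
        self.released.append(output)
        return "released"

    def approve(self, output: str) -> None:
        # Called by the human reviewer after checking the output.
        self.pending.remove(output)
        self.released.append(output)

queue = ReviewQueue()
print(queue.submit("Office hours are 9 to 5.", high_risk=False))
print(queue.submit("The client owes a late fee.", high_risk=True))
queue.approve("The client owes a late fee.")
print(queue.released)
```

In stricter regulated settings, the `high_risk` flag could simply be set to `True` for every output so that nothing is released without review.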

4. Output Consistency Checks

Another method organizations can use to detect AI hallucinations is checking output consistency. AI tools sometimes generate outputs that contradict themselves. An output consistency check examines whether the statements in a response agree with one another. If they do not, for example when a statement at the beginning says something completely different from the conclusion, the response is flagged and verified before any action is taken.
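Detecting contradictions inside a single response generally needs language understanding, so the sketch below uses a simpler, related variant: ask the same question several times and measure how often the answers agree. This is a swapped-in technique for illustration, not the within-response check described above. The function names, the sample answers, and the 0.8 threshold are assumptions.

```python
from collections import Counter

def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(answer.lower().split())

def agreement(answers: list) -> tuple:
    """Return (most common answer, share of answers that match it)."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    top_answer, top_count = counts.most_common(1)[0]
    return top_answer, top_count / len(answers)

# Made-up answers from four runs of the same question.
samples = ["Paris", "paris ", "Lyon", "Paris"]
top_answer, ratio = agreement(samples)
flagged = ratio < 0.8  # hypothetical threshold

print(top_answer, ratio, flagged)
```

Low agreement does not show which answer is wrong, only that the tool is unstable on this question and the output deserves verification.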

5. Rule-Based and Constraint Validation

In rule-based and constraint validation, enterprises define rules and boundaries for which outputs are acceptable and which are not. For example, if an AI tool is used in a financial context, the organization can set a rule that numbers within a certain range are acceptable, while anything outside that range gets flagged. These boundaries filter out outputs that result from hallucination.
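The range rule described above can be sketched as follows. The field names and limits are hypothetical examples, and the `RangeRule` class is an illustrative helper rather than part of any particular library.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class RangeRule:
    """Accept a numeric field only when it lies within [minimum, maximum]."""
    name: str
    minimum: float
    maximum: float

    def check(self, record: dict) -> Optional[str]:
        value = record.get(self.name)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return f"{self.name}: missing or non-numeric"
        if not (self.minimum <= value <= self.maximum):
            return f"{self.name}: {value} outside [{self.minimum}, {self.maximum}]"
        return None

# Hypothetical business limits.
RULES = [
    RangeRule("discount_pct", 0, 40),
    RangeRule("interest_rate_pct", 0, 25),
]

def validate(record: dict) -> list:
    """Return every rule violation found in an AI-generated record."""
    return [msg for rule in RULES if (msg := rule.check(record)) is not None]

print(validate({"discount_pct": 15, "interest_rate_pct": 7.5}))
print(validate({"discount_pct": 85, "interest_rate_pct": 7.5}))
```

A value inside the range can still be wrong, so range rules catch only the implausible outputs, not every incorrect one.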

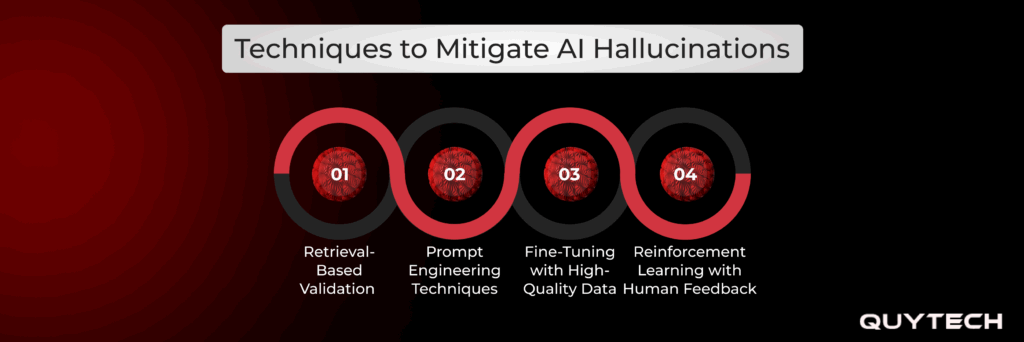

Techniques to Mitigate AI Hallucinations

Now that you are familiar with the methods of detecting AI hallucinations, let’s walk you through the techniques to mitigate AI hallucinations:

Retrieval-Based Validation

Retrieval-based validation, commonly implemented as retrieval-augmented generation (RAG), mitigates the risk of AI hallucinations by connecting AI tools to enterprise-relevant databases. Every time the AI tool responds, it first retrieves information from trusted knowledge bases. This reduces hallucinations because the tool can ground its response in the retrieved information instead of relying only on what it was trained on.
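A toy sketch of the retrieval step, assuming a tiny in-memory knowledge base and plain keyword overlap as the relevance measure. Real systems typically use a search index or embedding-based retrieval; the passages, function names, and thresholds here are illustrative assumptions.

```python
import re

# Hypothetical trusted knowledge base.
KNOWLEDGE_BASE = [
    "Employees accrue 1.5 days of paid leave per month.",
    "Expense reports must be submitted within 30 days of purchase.",
    "Remote work requires written manager approval.",
]

def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, top_k: int = 2, min_overlap: int = 2) -> list:
    """Return up to top_k passages sharing at least min_overlap words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(p)), p) for p in KNOWLEDGE_BASE]
    relevant = [(score, p) for score, p in scored if score >= min_overlap]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in relevant[:top_k]]

def build_prompt(question: str):
    """Build a grounded prompt, or return None when nothing trusted was found."""
    passages = retrieve(question)
    if not passages:
        return None  # escalate instead of letting the model guess
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. If the context does not "
        "contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

print(build_prompt("When must expense reports be submitted?"))
print(build_prompt("What is the stock price today?"))
```

Returning `None` when retrieval finds nothing is the key design choice: the question is routed elsewhere rather than answered from the model's general training.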

Prompt Engineering Techniques

Prompt engineering is the practice of writing detailed, specific instructions for AI tools. Hallucinations are more likely wherever a prompt leaves gaps: when instructions are vague, the AI fills in the missing information with what it thinks the answer probably is. Descriptive prompts that spell out the task, the context, and the expected output leave far less room for such guesses.
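One way to enforce gap-free prompts is a template that refuses to build a prompt when a part is missing. The function name, the chosen fields, and the sample text are assumptions for illustration; the right fields depend on the use case.

```python
def build_constrained_prompt(task: str, audience: str,
                             source_text: str, output_format: str) -> str:
    """Assemble a detailed prompt; raise ValueError if any part is left blank."""
    parts = {
        "task": task,
        "audience": audience,
        "source_text": source_text,
        "output_format": output_format,
    }
    missing = [name for name, value in parts.items() if not value.strip()]
    if missing:
        raise ValueError(f"prompt is missing: {', '.join(missing)}")
    return (
        f"Task: {task}\n"
        f"Audience: {audience}\n"
        f"Output format: {output_format}\n"
        "Use only the source text below. "
        "If it does not contain the answer, reply exactly: I don't know.\n\n"
        f"Source text:\n{source_text}"
    )

prompt = build_constrained_prompt(
    task="Summarize the payment terms.",
    audience="Finance team",
    source_text="Invoices are payable within 45 days of receipt.",
    output_format="Two bullet points",
)
print(prompt)
```

The explicit "I don't know" instruction gives the model a sanctioned alternative to guessing, though it lowers the risk rather than removing it.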

Fine-Tuning with High-Quality Data

Fine-tuning is the practice of further training an AI model on carefully selected, highly relevant datasets. Rather than relying only on general-purpose training data, the model is additionally trained on data curated for a specific industry or organization. This helps the model give more precise responses and hallucinate less.

Reinforcement Learning from Human Feedback (RLHF)

Reinforcement learning from human feedback is a mitigation technique that trains AI models in phases. The AI model first generates responses, humans then review and rate them, and the model is trained further on that feedback. This cycle repeats, teaching the model to respond more accurately while establishing guardrails.

Partner with Quytech for Grounded, Governed AI Solutions

In times when AI adoption is becoming a necessity, organizations wanting to implement it need not just advanced AI models but also ones that are free of hallucinations. And that’s exactly what Quytech brings. With over 16 years of experience in serving tailored AI solutions across industry verticals, Quytech trains AI models with highly structured, relevant, and quality datasets.

We place strong emphasis on implementing governance and guardrails in the AI models we build and train. Following this approach, our team delivers AI solutions that offer highly precise and quality output. We also embed validation mechanisms within the AI systems. With practices like these, Quytech builds AI solutions that are reliable, accurate, and aligned with organizational needs.

Conclusion

As is widely known, AI is a very powerful tool for enterprises. But that doesn’t cover the fact that it comes with its own risks as well, and hallucinations are among the most significant ones. AI hallucinations in enterprises are incorrect information generated by AI tools, confidently presented as accurate.

Not only does this sound serious, but it also impacts enterprises significantly. When enterprises use AI solutions that lack governance and guardrails for decision-making, hallucinated outputs can bring heavy financial losses. What’s more, if these outputs are exposed externally, they can attract legal and regulatory complications as well.

But like any other problem, AI hallucinations can also be detected and mitigated. They can be detected by implementing methods like output cross-verification, confidence scoring, and checking consistency. As for mitigation, techniques like retrieval-based validation, prompt engineering, and fine-tuning help enterprises in keeping AI hallucinations at bay.

FAQs

Yes, AI hallucinations in enterprises can occur even if the data quality is good. Although good data reduces the hallucination risk, it does not eliminate it, because there are other factors as well that impact the results AI tools give.

Yes, responsible AI in enterprises can help in managing hallucination risks. This is because it involves practices like human oversight, auditing, and establishing governance frameworks. All of these help in catching hallucinations before they are acted on.

It’d be better to say that AI hallucinations are more consequential than common in regulated industries. This is because such industries have to follow numerous regulations and are also highly supervised, so if decisions are made based on incorrect output, it can invite legal consequences.

Some architectural decisions that help in minimizing hallucination risks are:

1. Connecting with trusted knowledge bases through RAG

2. Setting rule-based bounds

3. Using highly relevant datasets for training

In AI-driven automation systems, hallucinations can introduce numerous risks. For example, a hallucinated output can trigger incorrect transactions automatically, leading to financial loss.